Service Offering#

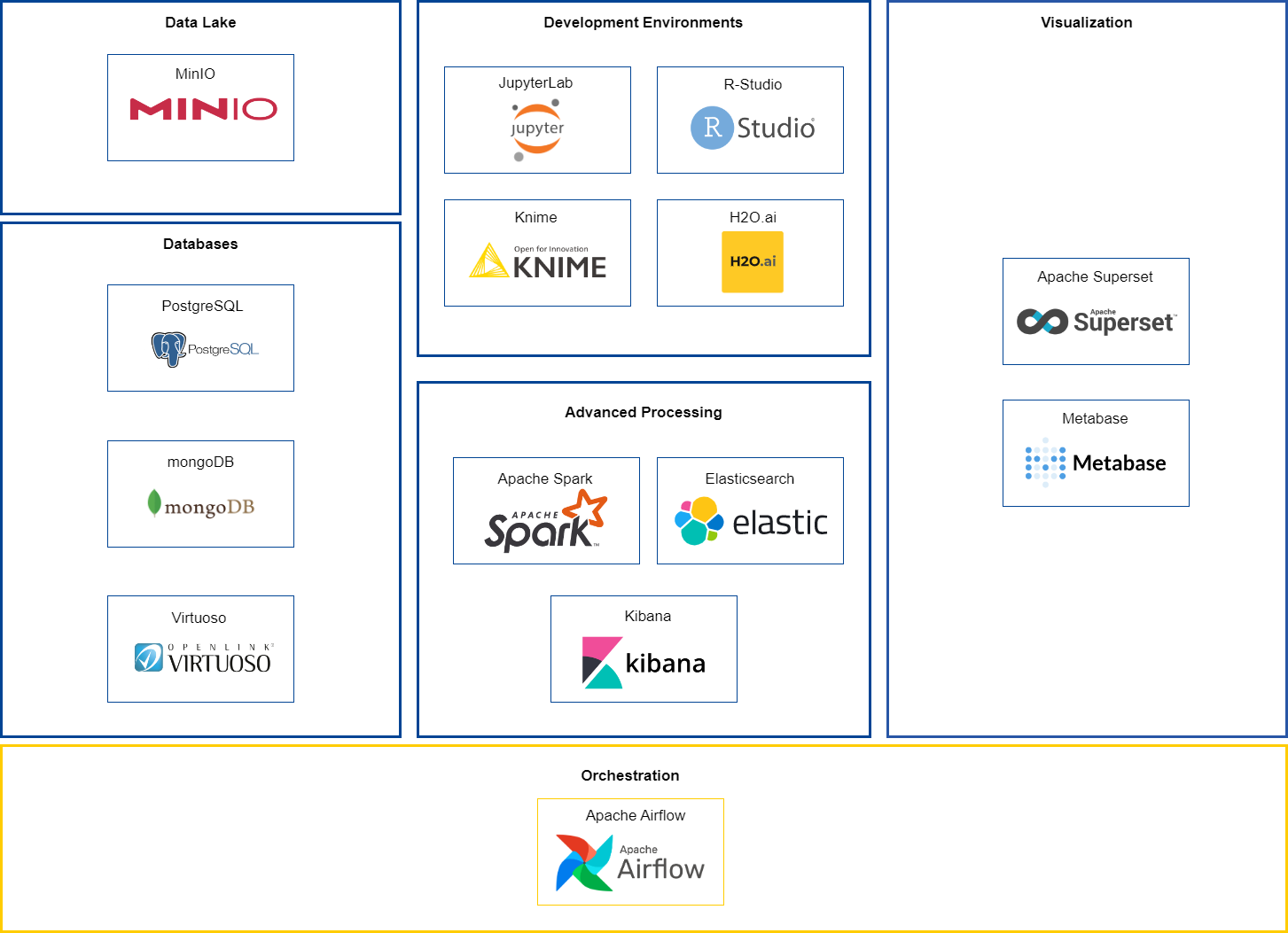

The service offering of BDTI consists of architectural components, which offer applications, virtual machines and/or databases to users in the context of analytics.

The architectural components are structured in the following categories:

- Databases

- Data Lake

- Development Environments

- Advanced Processing

- Visualization

- Orchestration

Databases#

Database solutions are made available to store data and perform queries on the stored data. BDTI currently includes a relational database (PostgreSQL), a document-oriented database (MongoDB) and a graph database (Virtuoso).

Component: PostgreSQL#

A PostgreSQL relational database is available to store data in a pre-defined structured format such that this data is made available for querying and analysis.

More information can be found here.

Component: MongoDB#

MongoDB, a document-oriented database, is available for storing JSON-like documents with optional schemas. MongoDB is a distributed database at its core, so it is high availability, scales horizontally, and geographic distribution are built in and easy to use.

More information can be found here.

Component: Virtuoso#

Virtuoso, a graph database, is available and can be used to collect data of every type, wherever it lives and use hyperlinks as super-keys for creating a change-sensistive and conceptually flexible web of linked data.

More information can be found here.

Data Lake#

A data lake solution is made available to store large amounts of both structured and raw unstructured data. The raw unstructured data can be further processed by deployed configurations of other building blocks (BDTI components) and next be stored in a more structured format within the data lake solution.

Component: MinIO#

MinIO, a high performance kubernetes native object storage, is made available where users can store unstructured data such as photos, videos, log files and backups.

More information can be found here.

Development Environments#

The development environments provide the computing capabilities and the tools required to perform standard data analytics activities on data that come from external data sources such as data lakes and datababes.

Component: JupyterLab#

JupyterLab is a web-based interactive development environment for Jupyter notebooks, code, and data.

More information can be found here.

Component: Rstudio#

RStudio is an Integrated Development Environment (IDE) for R, a programming language for statistical computing and graphics.

More information can be found here.

Component: KNIME#

KNIME is an open source data analytics, reporting and integration platform. KNIME integrates various components for machine learning and data mining, and can be used for your entire data science life cycle.

More information can be found here.

Component: H2O.ai#

H2O.ai is an open source machine learning and artificial intelligence platform designed to simplify and accelerate making, operating and innovating with ML and AI in any environment.

More information can be found here.

Advanced Processing#

Clusters and tools can be set up for processing large volumes of data and performing real-time search operatons.

Component: Apache Spark#

Apache Spark is available and can be used for implementing Spark clusters which are compute clusters with big data applications that are able to perform distributed processing of large volumes of data.

More information can be found here.

Component: Elasticsearch#

Elasticsearch is a distributed search and analytics engine for performing real-time search for a wide variety of use cases such as storing and analyzing logs, managing and integrating spatial information, and many more.

More information can be found here.

Component: Kibana#

Kibana is your window into the Elastic Stack. Specifically, it is a browser-based analytics and search dashboard for Elasticsearch.

More information can be found here.

Visualization#

A data visualization application is made available for the representation of information in the form of common graphics such as charts, diagrams, plots, infographics, and even animations.

Component: Apache Superset#

Apache Superset is an open source modern data exploration and visualization platform able to handle data at petabyte scale (big data).

More information can be found here.

Component: Metabase#

Metabase sets up in five minutes, connecting to your database, and bringing its data to life in beautiful visualizations. An intuitive interface makes data exploration feel like second nature—opening data up for everyone, not just analysts and developers.

More information can be found here.

Orchestration#

A data orchestration application is made available for automation of the data-driven processes from end-to-end, including preparing data, making decisions based on that data, and taking actions based on those decisions. The data orchestration process can span across many different systems and types of data.

Component: Apache Airflow#

Apache Airflow is an open source workflow management platform that allows you to easily schedule and run your complex data pipelines.

More information can be found here.